Would a separate 'Instagram for Kids' be a good idea, or would it expose youngsters to the dangers of social media, and the broader web, too early - and, in Instagram's case in particular, add undue mental pressure and facilitate cyberbullying among ever younger and more susceptible users?

Clearly, many experts and regulators are leaning towards the latter, with a new call from state attorneys general in the US for Facebook to halt its plans for an Instagram for Kids, noting the potential harms and dangers that could be facilitated, and amplified, by such an app.

As reported by CNBC:

"Attorneys general from 44 states and territories have urged Facebook to abandon its plans to create an Instagram service for kids under the age of 13, citing detrimental health effects of social media on kids and Facebook’s reportedly checkered past of protecting children on its platform."

The AGs' letter cites various research reports which indicate that young people’s use of social media can increase "mental distress, self-injurious behavior and suicidality among youth".

"Young children are not equipped to handle the range of challenges that come with having an Instagram account. Children do not have a developed understanding of privacy. Specifically, they may not fully appreciate what content is appropriate for them to share with others, the permanency of content they post on an online platform, and who has access to what they share online."

The letter further highlights Facebook's previous failings in protecting younger users, citing a specific example from an earlier junior version of one of its apps - Messenger Kids:

"Reports from 2019 showed that Facebook’s Messenger Kids app, intended for kids between the ages of six and 12, contained a significant design flaw that allowed children to circumvent restrictions on online interactions and join group chats with strangers that were not previously approved by the children’s parents."

Indeed, Facebook confirmed in 2019 that there was a potential issue with the group chats feature in Messenger Kids, which was quickly resolved, and there was no evidence that it was exploited. But still, when the key focus of the app is protecting kids, and it fails to do so in any way, Facebook is rightly going to be held accountable.

But then again, Messenger Kids has, overall, remained relatively incident-free, and is now up to 7 million monthly active users, so clearly, many children and parents are seeing value in the app. That's been amplified even further during the pandemic, throughout which Messenger Kids has facilitated connection while we've all remained physically separate.

More kids are using the app, and seeing benefit from digital connection, while it's also helping them learn the pros and cons of social media at a younger age, which is important, given the critical role it now plays in how we interact.

Given this, maybe Instagram for Kids makes sense. Maybe?

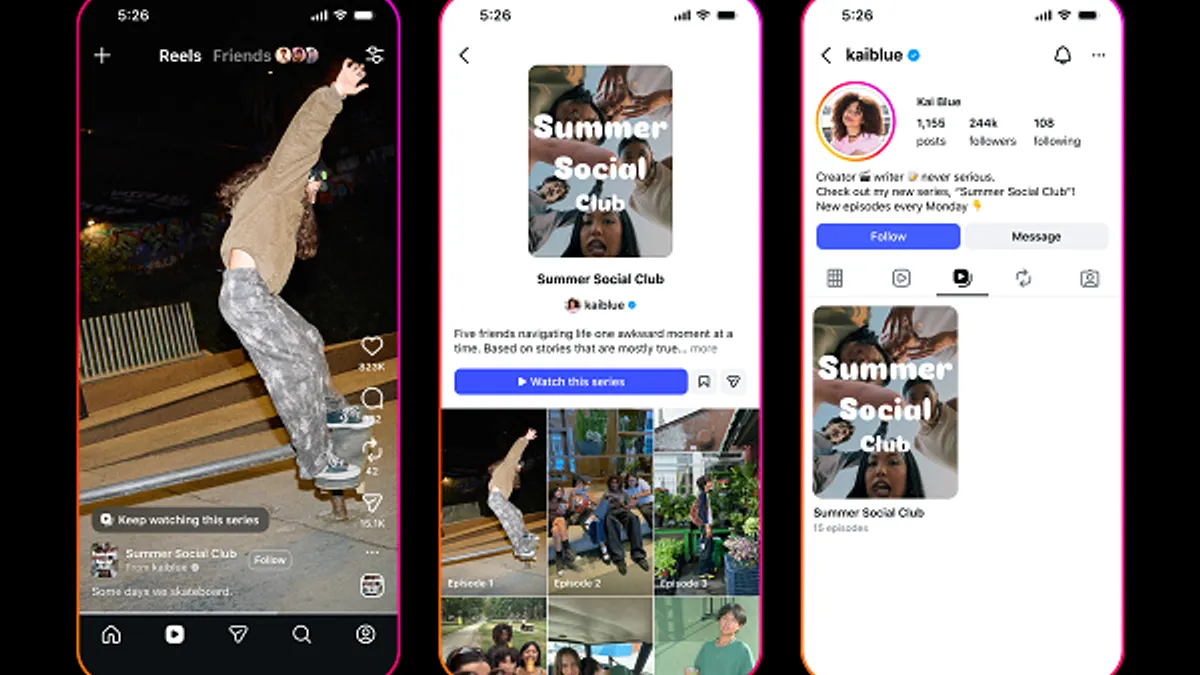

The logic of the proposal, as noted by Instagram chief Adam Mosseri, is that providing a separate Instagram for Kids platform will, ideally, stop younger users from seeking to join the main app instead, where the risks of unwanted exposure to the ills of the broader web are far greater.

“I have no doubt in my mind that this is a safer and better and more sustainable outcome for everyone involved.”

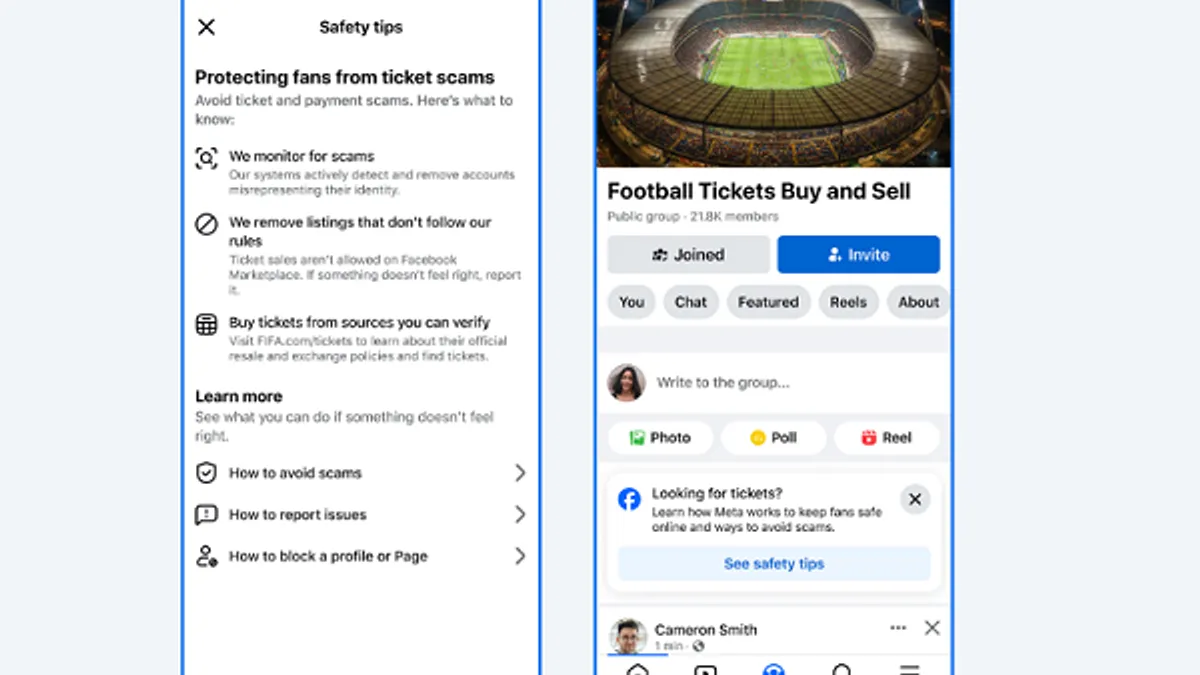

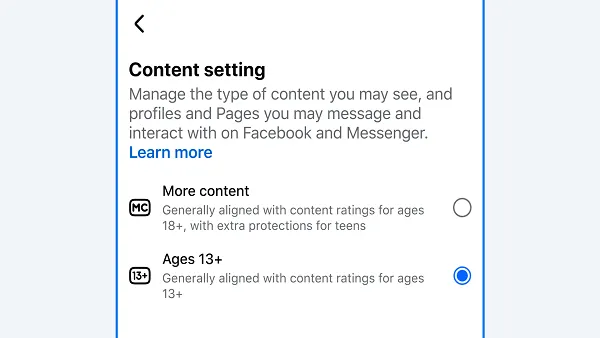

Mosseri and the Instagram team are already well along in developing Instagram for Kids, which will include various safeguards, and, as with Messenger Kids, parents will maintain full control over who their kids can communicate and interact with in the app.

Despite ongoing criticism of the project, which also includes a recent call from an international coalition of 35 children’s and consumer groups for Facebook to halt plans for the app, Mosseri clearly believes that this is the right way forward.

“It’s our responsibility to do the right thing, even if we get slapped around a little bit.”

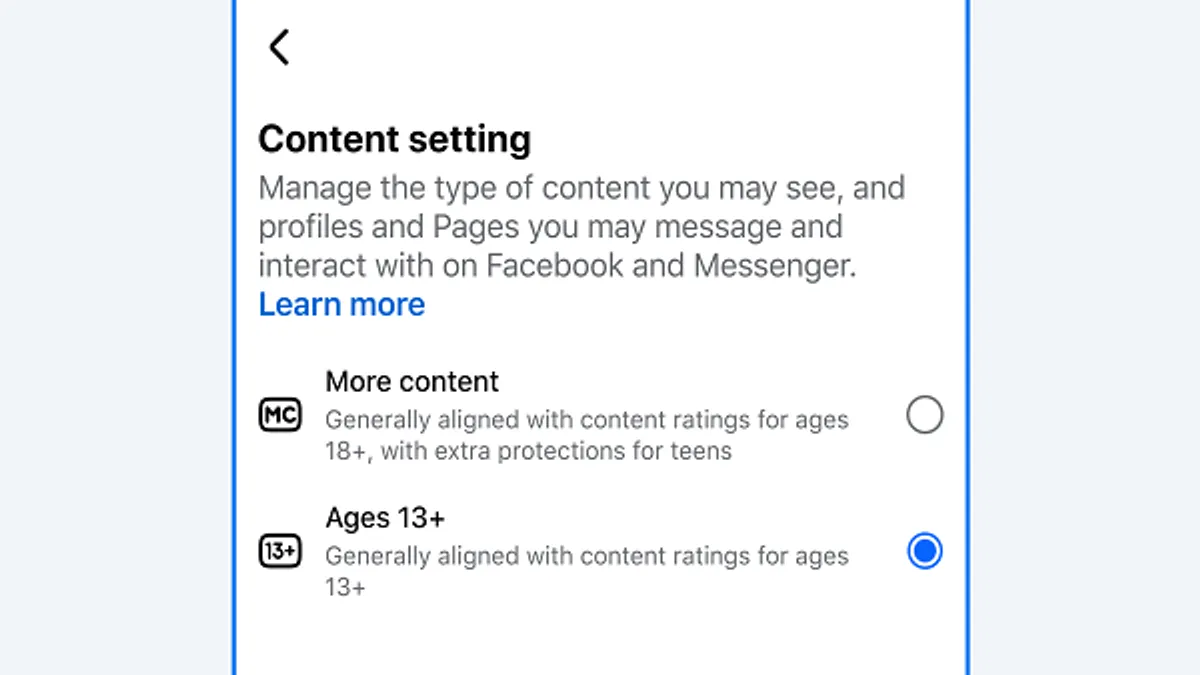

There will be no ads in an Instagram for Kids, and no data collection on kids' activities, as such. Facebook also says that it's working with experts in child development, child safety and mental health, as well as privacy advocates on the project.

So it's ticking all the necessary boxes - but still, there's clearly significant concern around Instagram usage among minors specifically, which may prompt Facebook to pause development in order to reevaluate before taking the next steps.

Or Facebook will push ahead anyway - as Mosseri notes, the company is willing to take a PR hit, if it believes it's on the right track.

But is it?

The app's focus on image, and the heavily edited, airbrushed, Photoshop aesthetic that Instagram has popularized over time, can give users an entirely skewed view of what other people actually look like, and how they compare as a result. With this in mind, is potentially adding that type of pressure to younger people a good move?

Clearly, many experts agree that it's a major concern.

Will Facebook take that on board, or keep moving on its growth path?