It’s not just X that’s coming under scrutiny over its platforming of misinformation related to the latest conflict in Israel.

A day after issuing a public letter to X owner Elon Musk, urging him to address content concerns in his app, EU Internal Market Commissioner Thierry Breton has made a similar request to Meta, while also threatening sanctions and fines in Europe if the company doesn’t comply.

The #DSA is here to protect free speech against arbitrary decisions, and at the same time protect our citizens & democracies.

My requests to #Meta’s Mark Zuckerberg following the terrorist attacks by Hamas against Israel — and on tackling disinformation in elections in the EU ⤵️ pic.twitter.com/RnetUriRJX

— Thierry Breton (@ThierryBreton) October 11, 2023

As per the above letter, Breton also raises concerns about the dissemination of AI-generated deepfakes in Meta’s apps, specifically in relation to the Slovakian election, and calls for a direct response from Meta on its evolving mitigation measures within 24 hours.

That’s pretty much the same language Breton used in his letter to Musk, as he looks to use the EU’s new Digital Services Act (DSA) regulations as a whip to prompt social platforms into action.

Breton hasn’t provided specific examples, at least not publicly, in either case, instead referring to third-party reports and “indications” that EU officials have been privy to, in relation to potentially rule-violating content.

Various reports have indicated that more harmful, misleading, and potentially illegal posts are reaching wider audiences through both apps, but it would seemingly serve the EU, and the platforms, better if EU officials were to provide direct examples for each company to respond to, so that each could outline its specific measures to address them.

Musk even asked for this, pressing Breton for specific examples, to which Breton replied that Musk is “well aware of your users’, and authorities’, reports on fake content and glorification of violence,” putting the onus on Musk, and by extension Meta, to provide assurances based on what they’re seeing.

It’s a little different in X’s case, because of the platform’s specific leaning towards allowing more content to remain up in the app, with X’s view being that more exposure will eventually lead to more understanding, with users able to manage the level of graphic content that they’re exposed to via their personal settings.

That could allow more harmful propaganda to proliferate, while most regions also have strict laws around the promotion of terror-related content. That’s partly what Breton is referring to, but right now, a lot of the discussion is based on partial reports and insights, with the platforms themselves being the only ones who know the specifics of what’s actually happening within their apps.

But then again, an increase in user reports to external agencies will also raise concerns, which is another source that Breton would be factoring into his messaging.

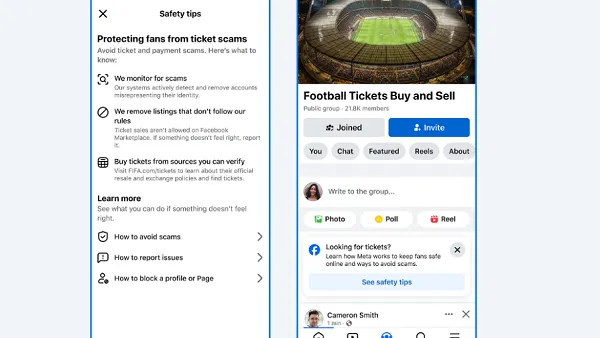

Essentially, both X and Meta are being used to share some level of propaganda, and X may be in a tougher position due to its massive cost-reduction efforts. But Meta, too, is a key distributor of public information, and as such, will be a key target for coordinated information pushes by partisan groups.

It’s the first big test for both under the new, stricter EU DSA, which could result in big fines if either platform fails to meet its requirements.

Both Meta and X say that they are doing all they can to ensure their users are well-informed on the conflict.