Given the size and influence of Facebook’s platform, its battle against political threats will likely never cease - especially now, in the wake of the 2016 US Presidential Election, which demonstrated how the platform can be used for co-ordinated attacks of this sort.

This week, Facebook has revealed that it has removed 32 Pages and accounts from Facebook and Instagram because they were found to be involved in “coordinated inauthentic behavior”. Specifically, the accounts appear to have been conducting a similar campaign to Russia’s Internet Research Agency (IRA) in the lead-up to the 2016 Election, where the objective was not so much to definitively support one side of a debate, but to fuel societal division, and turn Americans against each other.

In their investigation into this new threat, here’s what Facebook has found so far:

- Facebook has identified eight Facebook Pages and 17 profiles, as well as seven Instagram accounts, as part of this coordinated group

- More than 290,000 users have followed at least one of these Pages

- More than 9,500 organic posts have been created by these accounts on Facebook, and one piece of content on Instagram

- The Pages have run about 150 ads, spending approximately $11,000 across both Facebook and Instagram

- The Pages have created 30 events, with 4,700 accounts registering their interest in attending the largest of them

It’s difficult to determine the exact motivations behind each element, but Facebook has provided examples of the types of content each Page shared, which reveals some insight.

Some of the content is clearly political and works to exacerbate existing divides:

But other posts are less clear:

The groups also, as noted, created events. As you may recall, one of the most concerning elements of the IRA interference in the 2016 Election campaign was that they organized counter-protests between opposing groups on Facebook, then sent them to the same locations, sparking confrontation.

It appears a similar approach has also been employed in this new campaign.

At this stage, Facebook says that it doesn’t know who is behind the push, though elements do bear similarities to the IRA’s previous efforts. Whoever is responsible is getting smarter, however: Facebook notes that the group “went to much greater lengths to obscure their true identities” than others have in the past, underlining the ongoing challenge the platform now faces.

There are obviously many elements of concern here. Facebook has become a key source of news sharing and information, and can therefore be a key influencer in user behavior - especially for politically motivated content of this type.

Facebook may be particularly susceptible to this type of action because of how its News Feed algorithm works: it shows you more of what you engage with, which can further fuel divides between groups. For example, if you comment on several posts about a political issue, Facebook will show you more of the same, which will likely only help to further solidify your position on the topic, as your perception will likely be that the issues you’re upset about are significantly more prominent than they are.
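To make that feedback loop concrete, here's a toy simulation. It is not Facebook's actual News Feed algorithm, which isn't public; the function name, the `boost` and `feed_size` parameters, and the topic weights are all assumptions made for illustration. It only shows how an "engage more, see more" rule can let one topic take over a feed.

```python
def simulate_feed(initial_weights, engaged_topic, rounds, boost=0.5, feed_size=10):
    """Toy engagement loop (hypothetical model, not Facebook's real ranking).

    Each round, a topic's share of the feed is proportional to its weight.
    The user engages only with posts on `engaged_topic`, and that engagement
    raises the topic's weight for the next round.
    Returns the engaged topic's feed share at the start of each round.
    """
    weights = dict(initial_weights)
    history = []
    for _ in range(rounds):
        total = sum(weights.values())
        share = weights[engaged_topic] / total
        history.append(share)
        # Posts seen on the topic this round: share * feed_size.
        # Each engagement nudges the topic's weight upward.
        weights[engaged_topic] += boost * share * feed_size
    return history


# Three topics start out equally weighted; the user only engages with politics.
history = simulate_feed({"politics": 1.0, "sports": 1.0, "music": 1.0},
                        engaged_topic="politics", rounds=5)
for round_number, share in enumerate(history, start=1):
    print(f"Round {round_number}: politics fills {share:.0%} of the feed")
```

In this sketch the politics share climbs every round even though the user's behavior never changes, which is the self-reinforcing effect described above.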

Combine that with the way the algorithm determines your political leanings, then shows you more content from that side, and it definitely works to further push people towards one perspective or another - which is why groups like this would love having a tool like Facebook at their disposal.

If you need clarity on how that works, check out The Wall Street Journal’s highly cited ‘Red Feed, Blue Feed’ project to get an idea of just how polarizing the News Feed can become when it’s able to determine your political leaning.

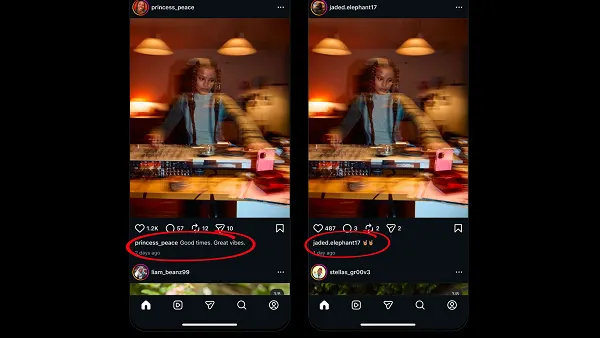

For the project, WSJ created two Facebook profiles, each following prominent Pages on opposing sides of politics. The result, as you can see, is that users end up getting entirely skewed perspectives – and because every post further underlines the position of the preceding update, you can see how Facebook can be such a polarizing political force.

And that’s before you add any additional interference from co-ordinated groups.

Facebook is working to fix this, adding in contextual links and information where they can, while separately, Twitter has also commissioned new research into how they can better break through such filter bubbles. But it’s clearly a problem, and one that groups like this will continue to exploit for gain.

The larger concern is just how prominent action like this could be. Facebook, in explaining how they detected this particular concern, notes that their team was specifically alerted because they found links between these new Pages and those previously flagged as being created by the IRA. There were more elements in their investigation than this, but the specific link highlights just how difficult it can be to identify political actors, particularly as they hide behind third-party proxies and VPNs to cover their tracks.

As noted, now that politically motivated groups know that this approach can be effective, there are probably more of them than ever targeting Facebook for such purposes.

It’s a positive that Facebook has detected this one, and is being so transparent in their efforts. But it’s also a reminder that it’s happening, likely at a much larger scale than you think.