Facebook CEO Mark Zuckerberg this week presented an impassioned speech about his views on the role that social media is now playing in society - and in particular, how Facebook and other platforms are influencing political outcomes, and what, if anything, they should do about it.

Facebook has come under intense scrutiny in recent weeks over its decision to exempt political ads from its rules against advertising which contains “deceptive, false or misleading content”.

This came after Facebook rejected an appeal from the team for US Presidential candidate Joe Biden against an ad campaign run by US President Donald Trump, which included unproven claims about Biden's speculated Ukrainian connections.

In response to the appeal from the Biden campaign, Facebook explained that:

"Our approach is grounded in Facebook’s fundamental belief in free expression, respect for the democratic process, and the belief that, in mature democracies with a free press, political speech is already arguably the most scrutinized speech there is."

So Facebook, essentially, is saying that it won't be the referee in political speech, even if it's untrue. Which, in some respects, seems totally wrong, particularly when you consider the potential impact of Russian meddling via Facebook in the 2016 election. But then again, it is a very complicated area.

Is Facebook taking the right approach?

According to Zuckerberg, social platforms have actually democratized power, and given people more of a voice - so rather than seeing social platforms as polarizing, and facilitating the spread of misinformation, it's actually the opposite:

"People having the power to express themselves at scale is a new kind of force in the world — a Fifth Estate alongside the other power structures of society. People no longer have to rely on traditional gatekeepers in politics or media to make their voices heard, and that has important consequences. I understand the concerns about how tech platforms have centralized power, but I actually believe the much bigger story is how much these platforms have decentralized power by putting it directly into people’s hands. It’s part of this amazing expansion of voice through law, culture and technology."

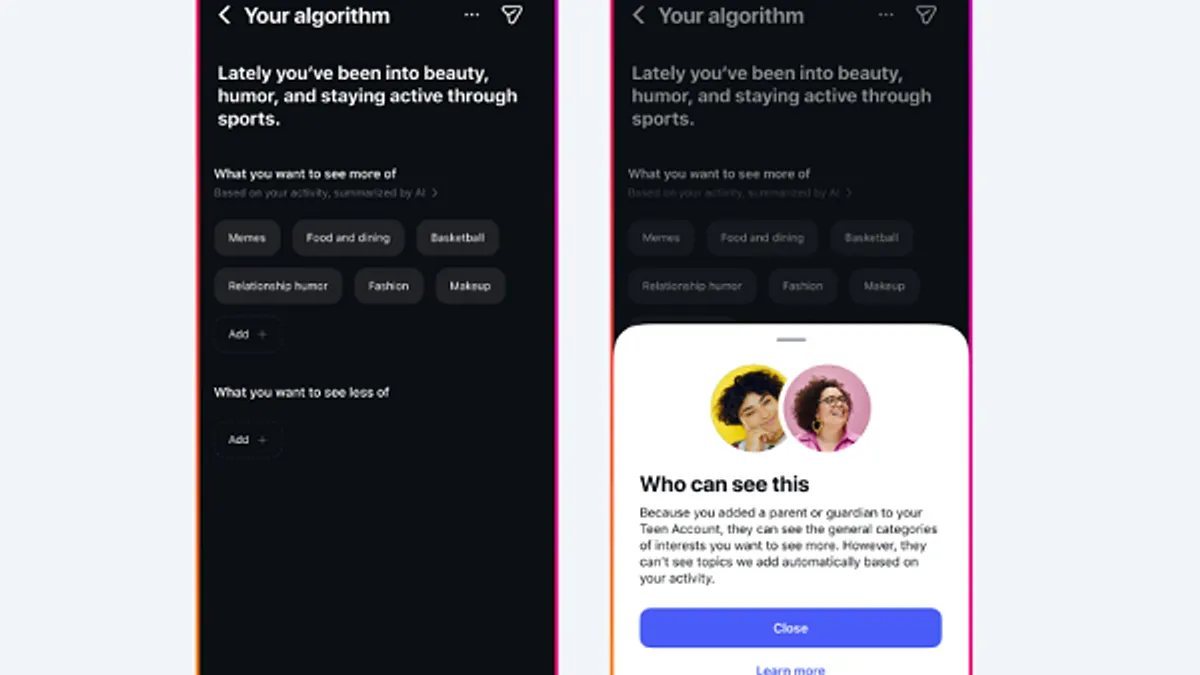

So it's less about potential manipulation, according to Zuck, and more about giving everyone a chance to be heard, with social platforms giving them more of a say. Which may, in some ways, be true - but then again, that assumes that people are using their voice, and not just taking in the messaging. It also sidesteps the idea that social platform algorithms, which show you more of what you like and agree with, and less of what you don't, can influence your thinking, potentially to a negative degree.

But Zuckerberg seems less interested in examining the specific impacts of content delivery, and more interested in talking up the power of social networks for information sharing. And in that, Zuckerberg doesn't believe that Facebook should necessarily intervene - unless there's a really good reason for it to do so:

"So once we’re taking this content down, the question is: where do you draw the line? Most people agree with the principles that you should be able to say things other people don’t like, but you shouldn’t be able to say things that put people in danger. The shift over the past several years is that many people would now argue that more speech is dangerous than would have before. This raises the question of exactly what counts as dangerous speech online. It’s worth examining this in detail."

Zuckerberg says that Facebook does have a responsibility to protect users from specific threats, even within political advertising. But up to that point, it's a question of free speech and letting the people decide. And Facebook doesn't want to be the one making that call on political ads.

"We recently clarified our policies to ensure people can see primary source speech from political figures that shapes civic discourse. Political advertising is more transparent on Facebook than anywhere else — we keep all political and issue ads in an archive so everyone can scrutinize them, and no TV or print does that. We don’t fact-check political ads. We don’t do this to help politicians, but because we think people should be able to see for themselves what politicians are saying. And if content is newsworthy, we also won’t take it down even if it would otherwise conflict with many of our standards."

So Facebook has no plans to remove political ads, because it's serving a civic purpose by hosting them. Not because it makes money out of them.

Of course, political advertising is not a major revenue stream for Facebook, in broad terms.

As per TechCrunch:

"The Trump and Clinton campaigns spent only a combined $81 million on 2016 election ads, a fraction of Facebook’s $27 billion in revenue that year. And $284 million was spent in total on 2018 midterm election ads versus Facebook’s $55 billion in revenue last year, says Tech For Campaigns."

But still, Facebook does profit from them - it's making money by keeping them up.

So is it really about wanting to provide more context and information to users, or is it a business decision?

It seems that Facebook falls into a gray area either way - if it allows political advertising, with no fact-checking, then Facebook potentially facilitates the spread of outright lies, which is what it's seemingly been seeking to avoid with its added election security measures and tools designed to improve the democratic process, and rid its platform of manipulation.

Facebook then sits in a gray area of political influence - is it good, is it bad? Is it to blame for political outcomes? I mean, it could have done more, right? It could have stopped the lies.

Facebook then sits in an unclear space as to what its influence is, and what should be done about it - though, of course, those questions only come up in retrospect, so it's difficult to judge Facebook on that basis at this point.

But then again, if Facebook bans political ads which include false statements, it puts itself in a difficult position of where, exactly, it draws the line.

As noted by Instagram chief Adam Mosseri:

Instagram CEO wishes it could ban political ads, but it’s complex https://t.co/5LMDKvatog

— Josh Constine (@JoshConstine) October 17, 2019

The most obvious examples of political misinformation are easy, but once you start implementing bans, it gets ever more complicated, and the specific definitions need to be clear. And then you end up in a gray area again, where no one's exactly sure what's allowed and what isn't. And then come the questions of how Facebook, potentially, might be seeking to influence political outcomes for its own benefit through its rulings.

One of these gray areas also enables Facebook to keep making more money. The other lessens its revenue potential.

Does that mean money is the key motivator here? I don't know, but I don't feel much more confident in Facebook's stance following Zuckerberg's speech.

Zuckerberg does note that he has considered banning political ads outright:

"Given the sensitivity around political ads, I’ve considered whether we should stop allowing them altogether. From a business perspective, the controversy certainly isn’t worth the small part of our business they make up. But political ads are an important part of voice — especially for local candidates, up-and-coming challengers, and advocacy groups that may not get much media attention otherwise. Banning political ads favors incumbents and whoever the media covers."

So again, according to Zuckerberg, it's about giving everyone a voice, and giving people the power to decide.

Which sounds good, it sounds right, but as we know, social platforms can and will be used to manipulate opinions. Is that then giving people a choice? Is the free flow of information - even lies - going to facilitate a more informed electorate who will make decisions for the greater good?

This is my concern about Zuckerberg's stance on political ads, and freedom of expression in general. As with all things Facebook, the company tends to err on the side of optimism, and overlook the potential negatives of its stances.

Zuckerberg actually notes this himself:

"I’m a little more optimistic. I don’t think we need to lose our freedom of expression to realize how important it is. I think people understand and appreciate the voice they have now. At some fundamental level, I think most people believe in their fellow people too."

People's belief in their fellow humans is irrelevant if they don't understand them. And if social platforms are manipulating their content inputs to the point where their opinions are being informed by lies, how can they understand each other? How can you expect that freedom of expression, based on what we've seen in the last few years, is going to help people develop a clearer understanding of the world, and not cloud their perspectives with bias built on untruths, especially if you choose to effectively allow those untruths?

There's a reason the anti-vax movement has gained momentum in recent years - and guess what, Facebook's taken action against that.

The capacity for politicians to effectively use lies in their campaigning is a problem - not one that's easily solved, granted. But also, based on real-world evidence, not one that can be simply trusted to the populace to work out on their own. No matter how you frame the benefits of free expression.