Given the expected flood of realistic-looking AI-generated images coming our way, this makes a lot of sense.

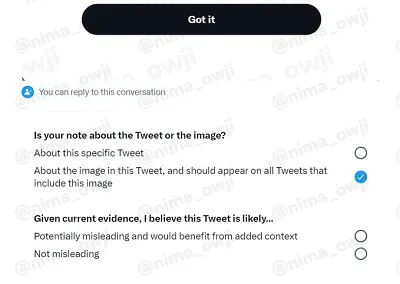

Twitter is currently working on a new element of its Community Notes contextual info indicators, which would enable contributors to add notes to visuals attached to tweets. Once applied, a note could then be appended to all versions of that image shared across the app.

As you can see in this example, shared by app researcher Nima Owji, Community Notes contributors will soon be able to add specific notes about attached images, with a checkbox that they can select to apply the same note to all other instances of that image across the app.

Which could be particularly handy for instances like this:

The boys in Brooklyn could only hope for this level of drip pic.twitter.com/MiqkcLQ8Bd

— Nikita S (@singareddynm) March 25, 2023

This image of Pope Francis in a very modern-looking coat looks real, but it isn’t – it was created in the latest version of Midjourney, a generative AI app. There have also been AI-generated images of former President Trump being arrested, and various other fabricated visuals of celebrities shared via tweet, which look pretty convincing. None of them are real, and having an immediate contextual marker on each, like a Community Note, could help to quash misinformation, and potential concern, before it becomes an issue.

Community Notes has emerged as a key foundation of Elon Musk’s ‘Twitter 2.0’ push, with Musk hoping that crowdsourced fact-checking can provide an alternative means to let the people decide what should and should not be allowed in the app.

That could lessen the moderation burden on Twitter management, while also using the platform’s millions of users to detect and dispel untruths, diluting their impact throughout the app.

Crowdsourcing facts does come with some risks, though. Some have already noted that Community Notes is, at times, being used to silence dissenting opinion, by selectively fact-checking certain elements of tweets and thereby raising questions about the whole post.

This has happened to us too.

— Zoë Schiffer (@ZoeSchiffer) March 10, 2023

In the Community Notes forum, Elon Musk fans have tried to discredit our Twitter reporting by saying, among other things, that I interned for Nancy Pelosi when I was in college. https://t.co/dSAtzuFc35 pic.twitter.com/DrS5ZoG2uX

Opinions will likely vary on whether that’s a fair use of the tool, but Community Notes could be weaponized against opposing viewpoints – though Twitter is working to build in additional safeguards and approval processes to tackle this.

Though I maintain that Twitter would be better off just including upvotes and downvotes on tweets.

If you want to enable users to share their opinions on what should and should not be seen, giving every user a simple way to do so would be more indicative. Community Notes can only be applied in retrospect anyway, the same as upvotes and downvotes used as a ranking factor, so the two are pretty similar in this context.

The concern would be that bot armies might weaponize downvotes – but voting may also provide another means to crowdsource user input on a broader scale.

Elon would probably make it a Twitter Blue exclusive anyway, so it probably wouldn’t help. Still, there are some concerns that the limited pool of Community Notes contributors could lessen its value as a broadly indicative measure.

But the Community Notes team is improving the process, and it could be a valuable addition – and the capacity to quickly tag all instances of an image, as noted, will become more important moving forward.