Facebook has been under fire in recent weeks over its decision to exempt political ads from its fact-checking process and rules, which essentially means that politicians will be able to run ads on the world's largest social media platform and say pretty much whatever they want. That could enable candidates to amplify the reach of lies and rumors - which some are already doing - and potentially manipulate voters and influence critical votes.

Facebook CEO Mark Zuckerberg explained this in a recent public address, in which he broadly focused on the values of free speech.

As per Zuckerberg:

"We don’t fact-check political ads. We don’t do this to help politicians, but because we think people should be able to see for themselves what politicians are saying. And if content is newsworthy, we also won’t take it down even if it would otherwise conflict with many of our standards."

To clarify, under Facebook's regular rules, all ads are fact-checked, and those which are found to be spreading false claims are disallowed:

"Per our Advertising Policies, we do not allow advertisers to run ads that contain content that has been marked false, or is similar to content marked false, by third-party fact-checkers. We disapprove ads that contain content rated false, which means these ads can’t run."

So in all other instances, Facebook would ban ads found to be spreading false or misleading claims. But for political ads - within which false claims arguably have the most impact - Facebook will not check, and will not censor them, at all.

The decision seems to go against the years of work Facebook has put into cleaning up its policies after its platform was used to spread political misinformation in the lead-up to the 2016 US Presidential election - but Facebook's stance is that it cannot, and should not, be the referee in political debate.

In some ways, that does make sense. Facebook's argument is that if it bans certain political ads, it then has to decide where to draw the line: what qualifies as a political ad, or as a misleading political claim, and how can a private company be the judge of that?

Indeed, in an opinion piece from Facebook's policy directors, published in USA Today this week, Facebook says that:

"Anyone who thinks Facebook should decide which claims by politicians are acceptable might ask themselves this question: Why do you want us to have so much power?"

That, of course, overlooks the fact that Facebook already has this level of power, and by not acting, it may be enabling such influence anyway - but it does also raise the question of how, in regular ads, Facebook can be okay with using outside fact-checkers, and banning false claims outright, yet in political ads it can't do the same.

But there are still some things that Facebook can do, even if it won't remove such ads, which can have an impact, and could help to lessen the potential of its platform being used to spread untruths from candidates within political campaigns.

1. Prompt all users to check their Ad Preferences in the lead-up to major election periods

This is not a fix in any way, and doesn't address the key issue, but Facebook can, and should, run a News Feed alert which prompts all users to check over their Facebook Ad Preferences, and remove anything which they don't agree with or doesn't look right.

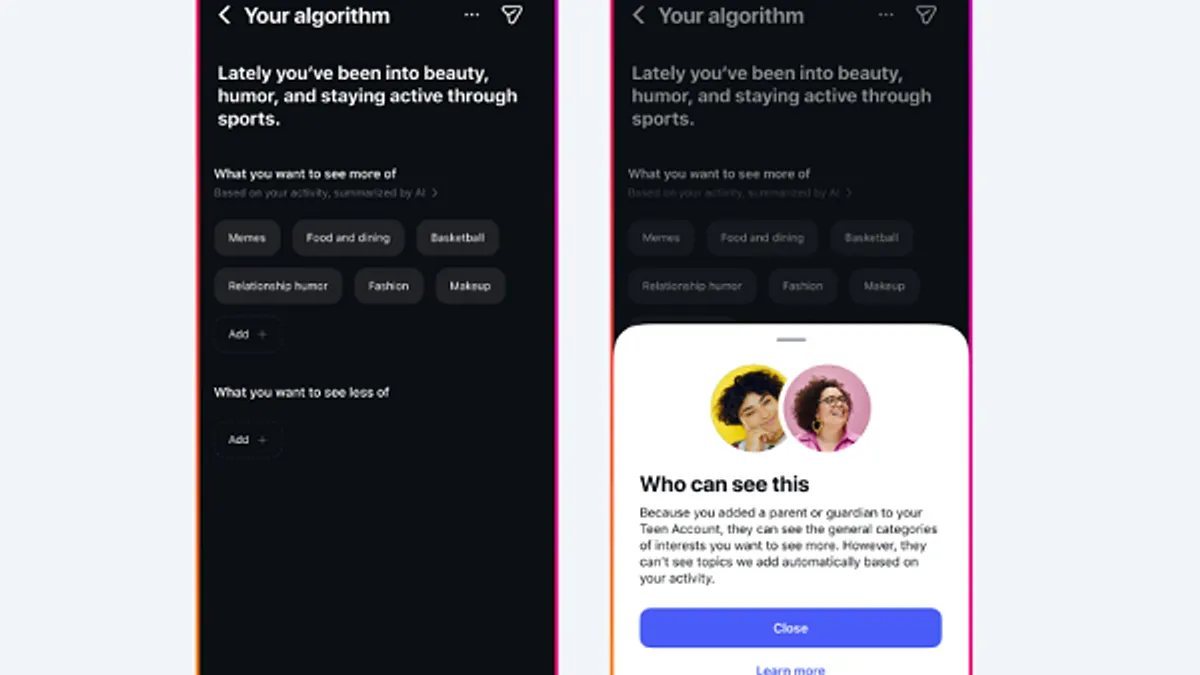

Not all users are aware of their Ad Preferences listing, which shows all the different topics and categories you can be targeted for in ad campaigns, and enables you to remove those you don't want to be pegged with.

This is how political organizations can target you, which Facebook should also explain, and you can remove elements like your relationship status, your job title, and your education, so that you're not targeted based on them.

Again, this isn't a solution, and most people probably won't bother to change their listings. But by making sure that people are aware of their options, and how they work, particularly as we head into election periods, it may help to lessen the impact of such targeting.

2. Add a 'Disputed by Third-Party Fact Checkers' tag to political ads with false claims

Okay, so Facebook won't remove political ads which include false claims, but they should still be subject to fact-checks. And even if Facebook won't stop the ads from running, it could still add a 'disputed by third-party fact-checkers' note to them, as it has already done on questionable links.

It wouldn't need to include the pre-sharing alert prompt (right), but a note like the one shown in the image on the left would add some level of accountability for such claims, while still enabling the ads to run - and still keeping Facebook relatively 'hands off', as the fact-checking is done by, as it says, third-party operators.

Facebook could even change the wording to 'Elements of this ad have been disputed by third-party fact-checkers', or minimize the alert prompt itself - but at least then it would still be doing something to stop candidates amplifying mud-slinging.

Facebook would also then be able to alert the candidate about the disputed tag, which could make them re-think running the ad. If it's going to be tagged, and make them look like a liar, maybe they won't want to go through with it.

This seems like a reasonable compromise which would ensure such ad content is still being checked, in some form, while also enabling Facebook to keep providing a platform for such - and playing a part in facilitating free speech.

Research released earlier this year showed that Facebook's 'disputed' tags can reduce the spread of false claims, at least to some degree, and the spread of such claims is the key concern with allowing candidates to amplify them through ads.

3. Include a prominent listing of Facebook ad spending by candidates

This is another measure which may not have a huge impact, but could help to better educate users.

Back in August, Facebook announced a range of updates to its Ad Library, including a new tracker of Facebook ad spend by major political candidates.

That's really interesting information to have, but it's kind of buried in the Ad Library, which, you'd assume, is not seeing significant use by the regular voting public.

But what if Facebook made that listing more prominent? What if, as part of, say, the new News tab, Facebook listed this chart, or a sampling of it, at the top of the screen, alerting people to check on each candidate's Facebook ad spend levels in real time?

That wouldn't change the ads each person saw, but it might help provide users with a little more understanding as to why they're seeing so many messages from one candidate or another, and give them some perspective on the promotions each is running on Facebook specifically.

If you were wondering why you were seeing way more ads about President Trump, but few about Joe Biden, this might explain why, and that could help users seek out further information on the less promoted candidates, or raise questions as to how each campaign is being run on the platform.

However you look at it, Facebook is a key platform for political campaigning, and providing more understanding of that, through available tools and data, would help to further improve digital literacy in this respect.

Really, there is no ideal, perfect solution here. Some have suggested that Facebook should ban political ads outright, which might work - but again, as Facebook says, where do you draw the line? And if Facebook isn't willing to do that, isn't willing to mark a threshold of what's acceptable, then providing better tools for educating users as to how they could be targeted, and giving them some alert on potentially questionable claims, seems like a better compromise than allowing a free-for-all.