Facebook won't fact-check political ads, but it is expanding its fact-checking program on Instagram as part of its broader effort to stem the flow of misinformation.

It's the latest in a series of somewhat contradictory approaches. As explained by Instagram, it's now expanding its fact-checking process beyond the US, which will see new warning labels added, globally, to all posts that share false information, as determined by third-party experts.

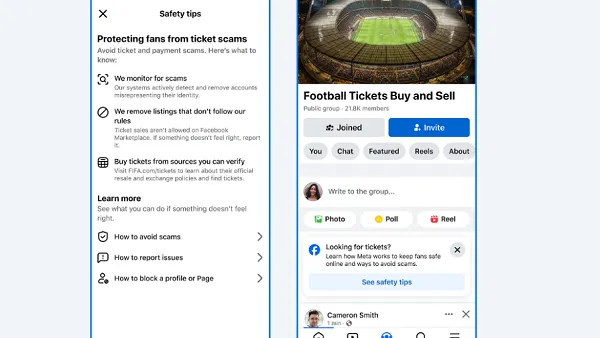

And as you can see above, Instagram's labels are actually far more intrusive than Facebook's (example below), which may help ensure that Instagram itself avoids getting drawn further into the 'fake news' debate.

As explained by Instagram:

"When content has been rated as false or partly false by a third-party fact-checker, we reduce its distribution by removing it from Explore and hashtag pages. In addition, it will be labeled so people can better decide for themselves what to read, trust, and share. When these labels are applied, they will appear to everyone around the world viewing that content – in feed, profile, stories, and direct messages."

In addition to the new warning labels, Instagram's also expanding its processes, and combining them with Facebook's fact-checking systems, to further reduce the spread of such content.

"We use image matching technology to find further instances of this content and apply the label, helping reduce the spread of misinformation. In addition, if something is rated false or partly false on Facebook, starting today we’ll automatically label identical content if it is posted on Instagram (and vice versa). The label will link out to the rating from the fact-checker and provide links to articles from credible sources that debunk the claim(s) made in the post. We make content from accounts that repeatedly receive these labels harder to find by removing it from Explore and hashtag pages."

So, Facebook's overall misinformation detection processes are getting better, and more expansive. Except in political ads. Where they're arguably most important.

Indeed, it's a confusing variance in approaches. On one hand, Facebook and Instagram should be praised for beefing up their efforts to stop the flow of false information - which, no matter how you look at it, is causing problems, particularly when you consider that so many people now get news content from Facebook.

Case in point - in Samoa, more than 70 people have died, and many more have been hospitalized, due to a measles outbreak which has been linked back to anti-vaccination posts shared on Facebook.

The issue highlights the need for action on misinformation - but then again, on the other hand, Facebook's willing to let political leaders lie and/or mislead voters in their campaign advertising, which can have significant consequences for ongoing policy directions, and significant implications for the future of the world more broadly.

Should those who stand to benefit most from misleading claims be allowed to make them? Is that a good outcome for society overall?

Of course, there are gray areas in such a process which can make enforcement difficult - if President Trump, for example, says that he's created millions of jobs, that can be seen as both true and not true, depending on how you look at it. That could, theoretically, put Facebook in the difficult position of playing political referee if it were to implement political ad fact-checking - but then again, saying that those edge cases are an impediment to implementing such a process at all seems like a stretch.

Regardless, it is good to see Facebook and Instagram taking more action on misinformation, and combining their approaches to stem the flow.

But Facebook's stance on political ads, specifically, will come under a lot more scrutiny yet.