While data use, and more specifically misuse, has become a major issue in recent times, there's still a general lack of understanding as to how data is collected, categorized and used by social platforms, as highlighted by this latest report from Pew Research.

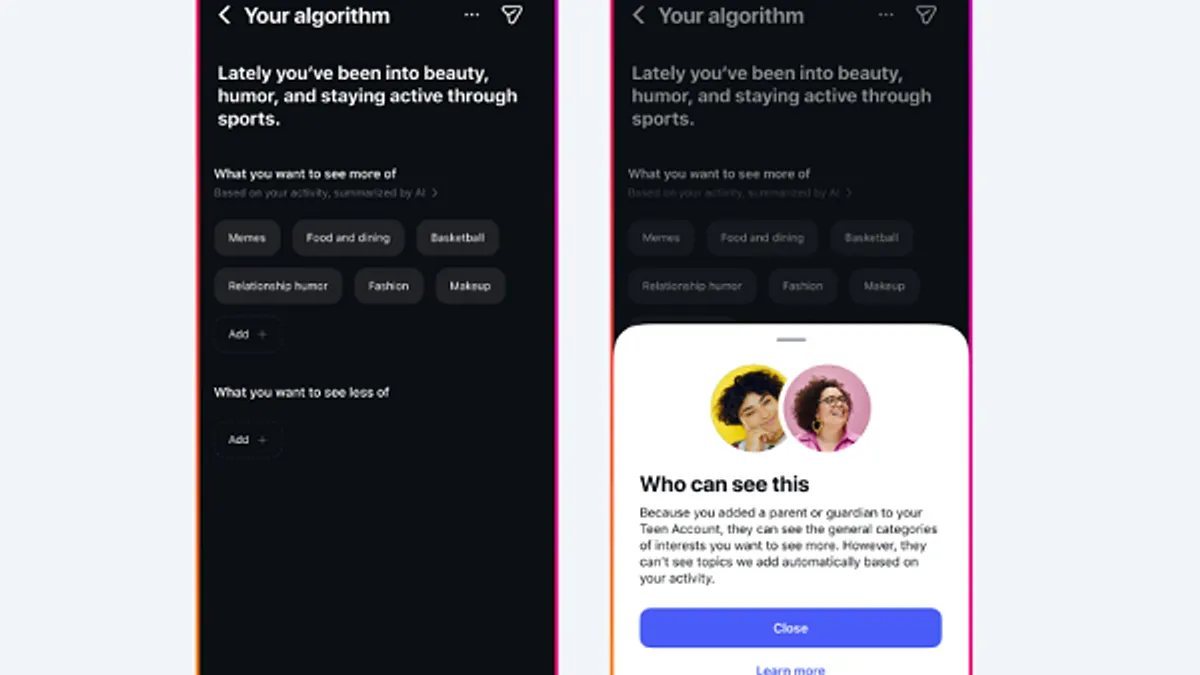

The team from Pew surveyed a thousand Facebook users in the US to gauge how much they knew about the types of data Facebook collects based on their on-platform activity, and how Facebook uses that data to categorize them for ad targeting. To do this, Pew got each participant to check their 'Ad Preferences' page on the platform, and to take a look at what topics Facebook had determined they were likely interested in.

And Facebook's tracking proved fairly accurate - according to Pew's results, the majority of participants (59%) felt that Facebook's categorizations represented them accurately.

As you can see above, participants also noted concern - 74% didn't realize Facebook categorized them like this, while 51% said they were not comfortable with it.

The findings underline the significant gap in understanding of data collection and how that data is used - while most users these days would have some awareness that their on-platform actions are being tracked and used for advertising purposes (with ad retargeting being the most prominent example), most don't understand exactly how much is being recorded, and what can be gleaned from it.

This is further highlighted in other aspects of Pew's report - for example, the majority of respondents said that Facebook's record of their political affiliation (where Facebook has assigned one) is accurate.

That would be a concern, particularly given the way the platform has been used by politically motivated groups to sway electoral behavior. Facebook itself has previously acknowledged that its platform can be used to influence the results of elections, and the fact that Facebook can, in large part, accurately categorize your likely voting preference further highlights the potential in this regard.

Should Facebook be tracking this at all? On one hand, you might be uncomfortable with it, and be opposed to Facebook noting such behavior - but then again, if Facebook stopped displaying this information on your Ad Preferences listing, that wouldn't make it any less true. Facebook would still be able to glean such insight based on your behavior - the finding here only goes to underline the depth of data insight Facebook has, and can use however it chooses.

Further to this, in another aspect of the report, respondents indicated that it would be "relatively easy for the platforms they use to determine key traits about them", including ethnicity, political affiliation and religious beliefs.

This is really where the problem lies, and where people don't have enough context to understand the implications. Most of the Facebook data collection and usage controversies ramp up and die out fairly quickly, because there's no ongoing user angst, and subsequently, there's little to no change in user behavior.

Case in point - while Facebook has long been questioned about its data collection practices, the platform has continued to add more users every quarter, showing that despite such issues, users are not overly concerned. That trend has continued even amid reporting on Cambridge Analytica and Russian interference in the 2016 US Presidential Election.

The challenge here is in showing what this actually means: explaining why such data collection is problematic, and how the data can be misused. Most people don't care about being targeted by advertisers with ads of potentially higher relevance, based on their actions. But would you care if you knew that you were being shown content that was designed to inspire anger, to trigger a stronger emotional response and skew your understanding, or to blatantly misinform you by exploiting your emotional leanings?

Again, that's not as easy to contextualize, but this is how information warfare is playing out in the modern age. While the most recent data scandals have highlighted significant concerns, they've also shown bad actors how they can exploit the same data, and you can bet that more politically motivated groups are now looking into Facebook data, and working on ways to steer the conversation in their favor.

This doesn't have to be overt. Given the depth of information available, it can be increasingly subtle and hard to detect. But as again demonstrated by this report, it is entirely possible. The depth of data Facebook has on every single one of its 2.2 billion users is significant.

Skepticism over what you see on the platform is important, but more than that, hopefully data insights like this will trigger more action by relevant authorities, and by the platforms themselves, to stamp out related misuse.

You can read Pew Research's full "Facebook Algorithms and Personal Data" report here.