Facebook has launched a new campaign which aims to educate users about fake news, and how they can detect misleading reports online, in order to reduce their spread.

As explained by Facebook:

"We want to give people the tools to make informed decisions about the information they see online and where it comes from. To support this effort, over the coming weeks we’ll be rolling out a new campaign in countries across EMEA to educate and inform people about how to detect potential false news."

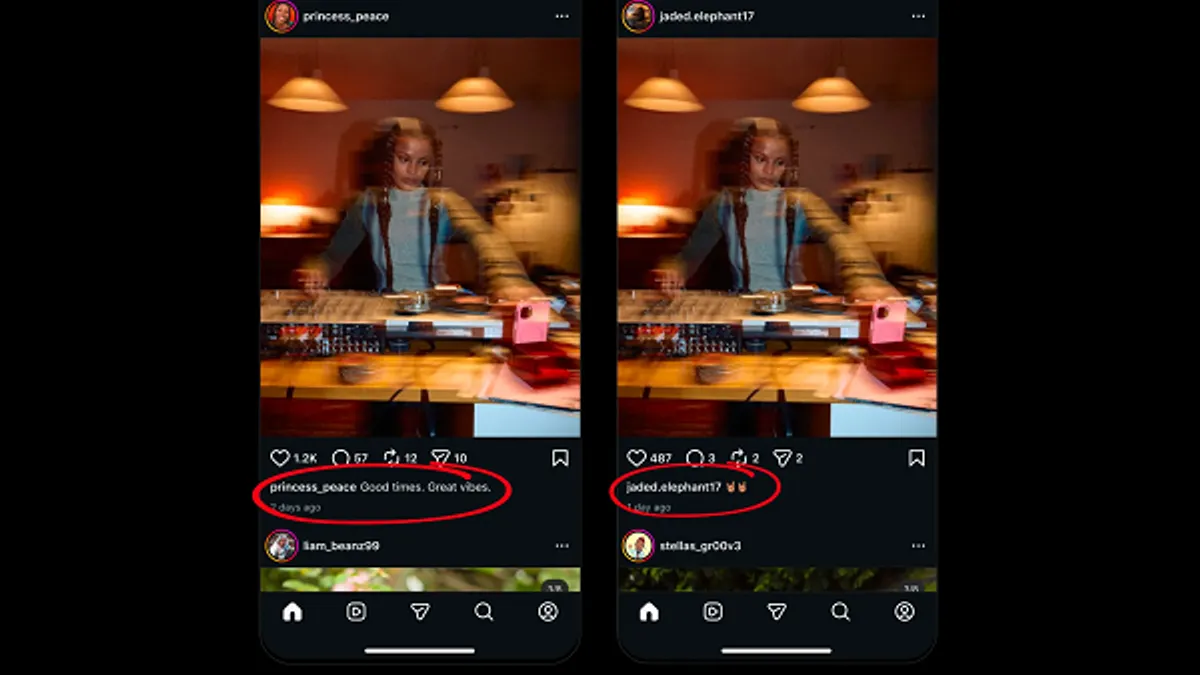

As you can see in this graphic, Facebook, in consultation with its fact-checking partners, has narrowed its education focus in this area to three key elements:

- Where’s it from? If there’s no source, search for one

- What’s missing? Get the whole story, not just the headline

- How does it make you feel? People who make false news try to manipulate feelings

By prompting people to consider these factors, Facebook could help more users detect misleading reports - or, at the least, further scrutinize any claims made within such articles - which could help reduce the distribution of false information.

The last point is often the most crucial. The modern news cycle is fueled by clicks, so publishers are essentially incentivized to spark an emotional response in order to maximize reach. An article titled 'The Origins of COVID-19' simply won't get as much viral traction as one titled 'China's Role in the Creation of COVID-19' - yet they could feasibly include the exact same content. One title sparks an emotional response, the other does not. The more readers take the time to question this element, the more skeptical - and likely less divisive - the subsequent debate will be.

It's also the most challenging - unfortunately, we often react based on inherent bias, which is not something we can consciously control. An article that reinforces our own viewpoint will spark a stronger response than one which doesn't - and many people will also comment on posts based on the headline alone, expanding the reach of content that they haven't even read. That's why Twitter added a new pop-up warning earlier this month when people go to share a post that they haven't opened.

By prompting users to think more deeply about the content they're re-distributing, Facebook may be able to limit the flow of misinformation and help improve digital literacy overall, breaking down some of those viral conspiracy elements.

As the flood of various conspiracy theories and misinformation campaigns around COVID-19 has shown us, fake news is a major problem online, and can have major, real-world impacts when people are influenced by it.

That's why all the major social platforms have been working to remove such content in relation to coronavirus, banning 5G conspiracy theories, inaccurate health advice, cures and other myths.

And for the most part, they've done pretty well - while some of these theories have still been able to grow online, most have, at the least, seen their distribution reduced due to measures taken by the platforms.

But that then raises the question - why can't social platforms do this with all fake and misleading information? Why can't, for example, climate change denial or anti-vax rhetoric be banned? Why can't the social networks take the same tough stance on all forms of misinformation online as they have with COVID-19?

The truth, of course, is that elements of these discussions are not definitive. Social platforms would also prefer not to be, as Facebook has repeatedly noted, the 'arbiters of truth'. They would prefer that users remain free to discuss whatever they like online, among their friends and connections, in order to facilitate a more open forum for ideas and community.

The utopian dream of social media as 'a global town square', where anyone can talk to anybody else, about anything, has long been the driving force behind the ambitions of the Mark Zuckerbergs and the Jack Dorseys of the world, which is also why it's so difficult for these idealists to shift their mindset towards restricting such discussion. Social media has never been about censorship - so how do you match your ambitions against reality, especially when it feels like you're so close to building a better, more inclusive situation?

The reality, unfortunately, is that any platform which provides mass reach and distribution also has a responsibility to ensure that such capacity is not being misused, and causing harm as a result. As Zuckerberg has stated, he has faith in people, and the greater capacity for good within humanity. But idealism and reality have never sat comfortably together. Belief is one thing, but seeing the practical impact, and where the system is failing, is also key to ensuring that such tools improve the world rather than harm it.

Maybe, through initiatives like this, Facebook can play a bigger part in helping people help themselves in this respect. Combined with Facebook's News tab, and algorithm updates designed to amplify credible news, this could further limit the sharing of less credible, more emotion-based content, designed solely to drive clicks.

Even if that means causing greater societal harm in the process.

Facebook's new digital literacy campaign will initially roll out to people across the EU, as well as the UK, and countries across the Middle East, Africa and Turkey.