Facebook announced a redesign of its app and reiterated its new focus on privacy at its F8 conference this week. Taken together, those moves are starting to look like an entire re-brand: a completely new approach for the company that goes beyond just aesthetics and PR speak.

Now, Facebook has announced that a range of extremist commentators will be banned from the platform entirely under its 'Dangerous Individuals or Organizations' regulations, as outlined in its guidelines.

The impacted individuals will include:

- Louis Farrakhan - Leader of the Nation of Islam

- Alex Jones - Right-wing commentator

- Paul Nehlen - White supremacist politician

- Milo Yiannopoulos - Far-right 'entertainer'

- Paul Joseph Watson - Editor at Infowars

- Laura Loomer - Far-right activist

Even from this incomplete list, you can see how this may cause some backlash - far-right groups regularly complain that they're being silenced, and this will only stoke that anger.

But they'll have to vent about it off Facebook - according to a Facebook spokesperson, the company will not only ban the Pages of these individuals, but it will also remove any links to their sites shared by Facebook users. If a user attempts to share content from these sites on multiple occasions, they too will face bans.

Users will still be able to create their own posts praising these commentators and their perspectives (subject to the usual content guidelines), but sharing direct links to their content will be a no-go.

It's a major move by Facebook, underlining the company's renewed focus on curbing the rising amount of hate speech on its platform, which is not only causing societal division, but is also leading to increased attacks and violence in real life. In recent months, there's been a spate of horrific incidents linked to far-right activism, including shootings in Pittsburgh, Christchurch and Poway. Facebook, which has been working with a range of third-party groups in developing its platform policies on hate speech, has clearly deemed that such content now poses a significant enough risk that it no longer wants to play any potential role of any kind, leading to this new action.

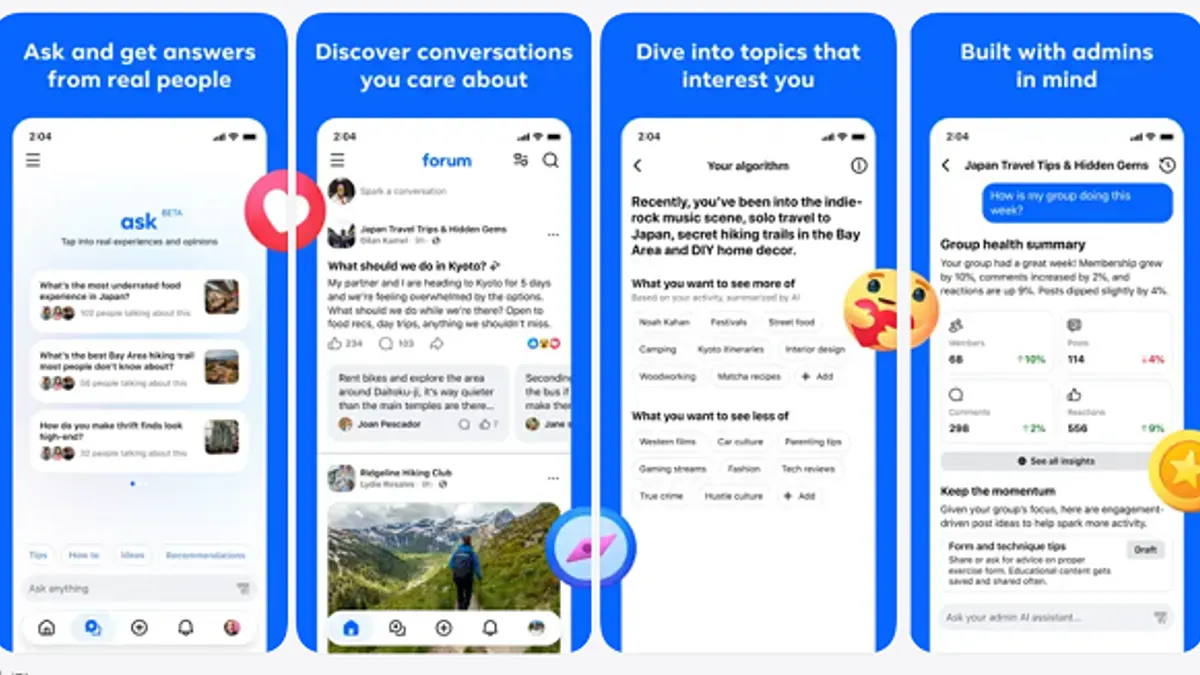

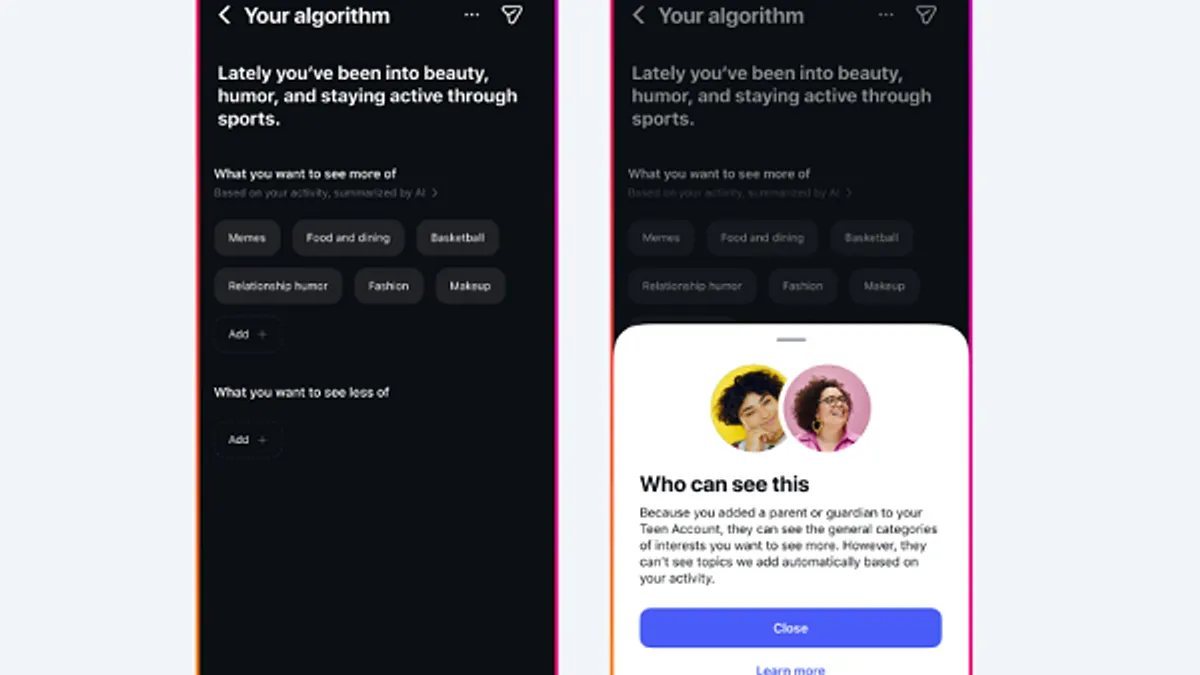

The bans will be a major blow for these activists, many of whom have built their followings by working with Facebook's algorithms to amplify their voices. For example, the News Feed algorithm will boost posts which generate a lot of discussion, and what generates more discussion? Divisive, controversial perspectives spark stronger, more emotional responses, which then lead to comments and sharing - all the things that tell Facebook's algorithm that these posts will spark even more of the same if they're seen by more users.

It's a perfect example of where Facebook's optimism can bite it - the idea of the algorithm is to show people more of what they're interested in to keep them more engaged and active on the platform. But in aiming to showcase more 'interesting' discussion, it can also be used to spread questionable, speculative and argumentative topics.

Here's another example - take a look at this Google Trends chart of the rise in 'flat earth' related searches in the past five years.

That early spike in 2016 stemmed from rapper B.o.B's claims around the Earth's flatness, which he shared on Twitter - and which were quickly rebuked by the scientific community. But since then, the idea has continued to gain momentum - despite all scientific research and evidence pointing to it being incorrect.

The concept is more harmless than far-right extremism - or the equally concerning anti-vax shift - but it highlights how social platforms, in particular, are amplifying these alternate narratives. Sharing algorithms boost content which generates discussion, so a movement like this could well start off as a joke, a meme shared as an example of its absurdity, a joke from an NBA player during an interview. That may be all it needs to actually start gaining a foothold amongst more impressionable, cynical web users, who latch onto this new 'truth', even if its threads of evidence are barely holding it together.

Looking at the chart above, you can see how this 'movement' had virtually no traction before the age of social media - which is confusing in many ways because people now have more access to evidence and research than ever before. If you want to disprove something, you can, by conducting your own research online - but it's almost as if the sheer volume of information available, and the access we have to it, has led to a revolt against it.

Arguments and discussion used to center around things that we couldn't prove in the moment, but now, with smartphones at the ready, we can look up anything at any time, so it's almost as if this new shift against established theory is the next evolution of our need for interpersonal debate. "That's what the science says? Well, science is often wrong - look all through history." The skepticism we used to hold onto has seemingly evolved into ignorance, and clinging to whatever beliefs you personally might choose. Social media facilitates this by providing the capacity to link up with others who'll support the same beliefs. In this sense, the negative of social connection is the same as the positive: it connects you with more like-minded people, amplifying shared perspectives.

Given this, will Facebook's efforts to remove these spokespeople help? It definitely can't hurt. Twitter users have been calling on that platform to 'ban the Nazis' for years now, and with Facebook taking the lead, maybe we'll see increased action from all social platforms on this front. It'll probably also be a blessing for fringe platforms like 8chan, which has become a haven for far-right discussion. The people who believe in such ideology are not going to disappear as a result of Facebook's shift, even if we can no longer see them, which will likely boost alternate platforms that are more tolerant of their extreme views.

And that's probably fine - Facebook, at 2.38 billion users, provides the greatest capacity for influence and the spread of such views. Without Facebook as a distribution platform, such commentators will have a much harder time gaining mass exposure, which could be a significant blow to the mini-empires they've created.

This is a positive move for Facebook. Right-wing groups, and no doubt right-leaning politicians, will be upset. But the potential risks far outweigh such concerns.