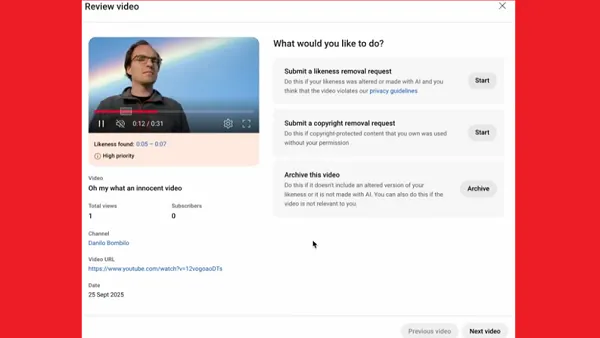

Meta is expanding its test of new notification alerts that provide more context on post removals in its apps. Specifically, the alerts will let users know when a post has been removed via its automated detection process.

As reported by Protocol, Meta launched an initial test of the updated alerts last year. The alerts explain why a post was removed, and indicate whether the decision was made by a human reviewer or enforced via automated detection. That added detail on the process could help to reduce user angst, while also enabling people to appeal on the same grounds, which could better address errors.

The alerts were formulated in response to a recommendation from the Oversight Board after Meta’s automated detection removed images that had been used in a breast cancer awareness campaign.

As explained by the Board:

“In October 2020, a user in Brazil posted a picture to Instagram with a title in Portuguese indicating that it was to raise awareness of signs of breast cancer. The image was pink, in line with "Pink October", an international campaign popular in Brazil for raising breast cancer awareness. Eight photographs within a single picture post showed breast cancer symptoms with corresponding descriptions such as "ripples", "clusters" and "wounds" underneath. Five of the photographs included visible and uncovered female nipples. The remaining three photographs included female breasts, with the nipples either out of shot or covered by a hand. The user shared no additional commentary with the post.”

Various other breast cancer-related groups have raised similar concerns, with their posts and Stories being removed for violating Meta’s guidelines, even though its rules do state that mastectomy photos are allowed.

The Board recommended that Meta formulate a policy to provide more transparency on such removals, which Meta agreed to, while it also updated its systems to ensure that identical content with parallel context is not removed in future.

Of course, when you’re using automation, especially at Meta’s scale, some errors are going to occur. Even so, the advantages of such a process outweigh the false positives and mistakes by a significant margin.

Indeed, Meta has repeatedly noted its improvements in automated detection in its regular Community Standards enforcement updates:

"Our proactive rate (the percentage of content we took action on that we found before a user reported it to us) is over 90% for 12 out of 13 policy areas on Facebook and nine out of 11 on Instagram."

Making some mistakes is a side effect of this additional protection, and no one would argue that Meta shouldn’t lean towards caution, rather than letting things through, in this respect.

The new alerts add another layer of insight, which will hopefully help Meta improve its systems and address errors like these in future.