Facebook and Instagram users now have a new avenue to seek action on offensive content. Facebook's independent Oversight Board has expanded its remit, and can now hear appeals on content that has been left up on the platforms, rather than only reviewing content removals, as it did in its initial stage of operation.

As explained by Facebook:

"Starting today, people who use Facebook and Instagram now have the ability to appeal other people’s content that has been left up to the Oversight Board. Since October 2020, if content was removed from Facebook or Instagram and a user disagreed with Facebook’s re-reviewed decision to keep it down, that content was eligible for final appeal to the Oversight Board. Today’s announcement represents an expansion of the board’s initial scope. As originally contemplated by the Oversight Board’s bylaws, the board can now review Facebook’s decision to leave content on the platform - content eligible for appeal to the board still includes posts/statuses, photos, videos, comments, and shares."

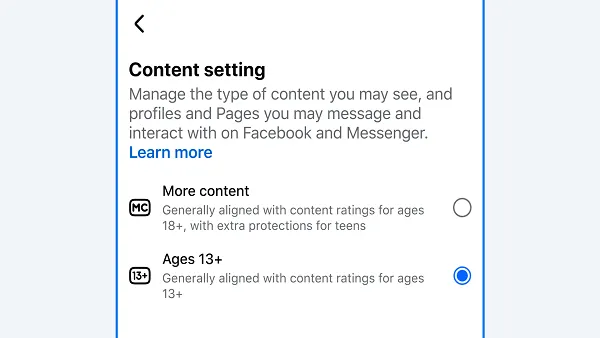

To request an Oversight Board review, users will first need to report the content to Facebook through the regular process. If Facebook opts to keep the content up despite the complaint, the reporting user will receive an Oversight Board Reference ID in their Support Inbox, which can then be used to appeal Facebook’s decision to the Oversight Board.

The new system has been set up to handle multiple complaints on a single piece of content, and to protect user privacy within the process. Facebook has also established new guidelines on informing relevant parties, and on how the Board's decision will be communicated to them.

The expansion will open up new avenues for content decisions, which could have broader implications for Facebook's moderation and review processes moving forward.

Facebook's independent Oversight Board is the biggest project being run by any social media platform with regard to re-thinking how moderation decisions are made, and how they're enforced moving forward. Such decisions have become a key point of focus over the past few years, with many calling on the platforms to take increased action over divisive speech, particularly from political leaders.

Each platform has approached these concerns in different ways - Twitter, for example, banned political ads entirely as part of its broader effort to limit misuse of its platform for such speech.

Facebook has come under intense scrutiny over its handling of hate speech, and the platform's capacity to fuel violent movements. Indeed, various reports indicated that Facebook had allowed concerning movements, like QAnon, to thrive in private groups for years before taking action.

For its part, Facebook has sought to lean more towards free and open speech, preferring to stay out of such judgments. But as we've seen, that approach, at Facebook's scale, can have dangerous consequences, which extend far beyond the platform itself.

That, ideally, is where the Oversight Board comes in: a team of independent experts in various fields who are empowered to pass judgment on Facebook's moderation decisions, and even to influence platform policy through their rulings.

It's still too early to tell how much impact the Oversight Board will have, but the early results have seemed promising, and it could end up being a key tool in ensuring greater equality, representation and overall fairness in Facebook's content decisions.

The expansion into hearing cases of both removal and failure to act is a significant step in this regard, and will give a clearer indication of the potential of the independent body.

Now we await the Board's first major test - ruling on the platform's ban on former President Donald Trump.