YouTube announced a major expansion of its likeness detection tools, which will now allow anybody who is at risk of impersonation to upload an image of their face. YouTube can then cross-check that image against other uploads and alert them to potential imposters and deepfakes.

As reported by The Hollywood Reporter, YouTube’s expansion gives people an additional layer of protection against misrepresentation and misuse of their image.

THR said that all actors, athletes, creators and musicians, whether they have a YouTube channel or not, can sign up to identify and request removal of deepfakes from the app.

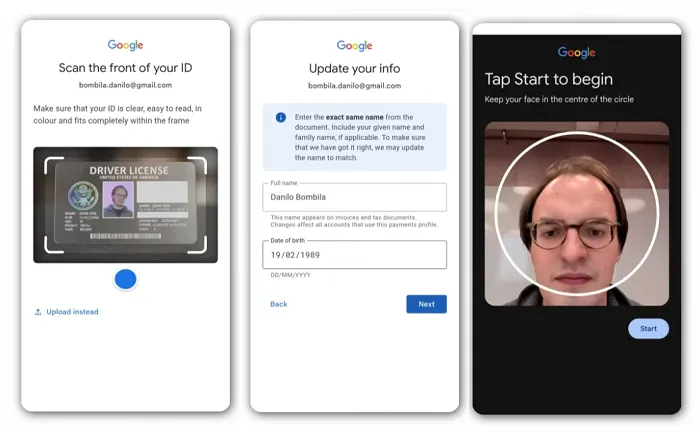

YouTube has been working on its likeness detection tools since September 2024. The tools use face scans, as well as government IDs, as a reference when checking uploaded content across the app.

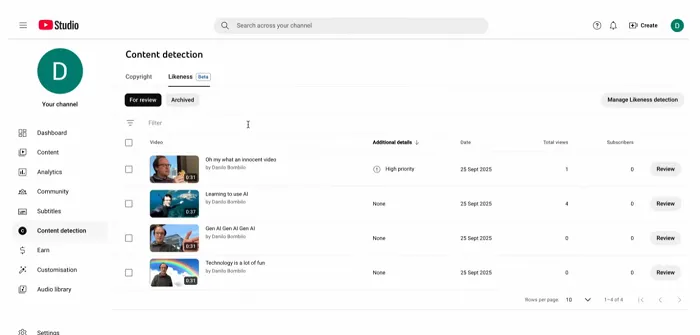

The platform can then alert users to similar visuals within uploaded content, so users can determine whether their image is being used by somebody else.

YouTube previously made this option available to selected creators, government officials, journalists and political candidates. Now, according to THR’s report, the tool is available “for those most at risk of having their livelihoods damaged by the technology.”

That, in the age of AI-generated deepfakes, could be a lot of people.

There have been various AI deepfake trends, from the “Pope in a Puffer Jacket” to highly accurate, fan-created depictions of potential scenes from upcoming movies. All of these use AI tools to create increasingly convincing images and videos that appear to depict real people.

Many of these images and videos are benign and made purely for entertainment, and it’s worth noting that YouTube will allow certain depictions within its rules, as long as they don’t violate a user’s rights.

But some images and videos pose a concern, and this expanded process will now enable more people to remain aware of, and request removal of, deepfakes and replicas that could cause harm to them or to their business interests.

Deepfakes are set to become a more significant problem over time.

YouTube said that as AI tools evolve, many more vectors for copycat work will arise, making it harder to police false depictions and misrepresentation.

That makes this an important first step. Expanding access will enable creators to get ahead of the curve and potentially dispel misrepresentation early as they benefit from YouTube’s evolving detection processes.